Text-to-video with waveform preview and narration loudness control

Pippit AI Avatar Use Cases

Create Engaging Training Videos

Transform dry training materials into dynamic video lessons. Pippit's AI avatars deliver your script with perfect clarity and consistent tone, saving hours of recording time. AI automation ensures high-quality, professional narration for every module, boosting learner engagement effortlessly.

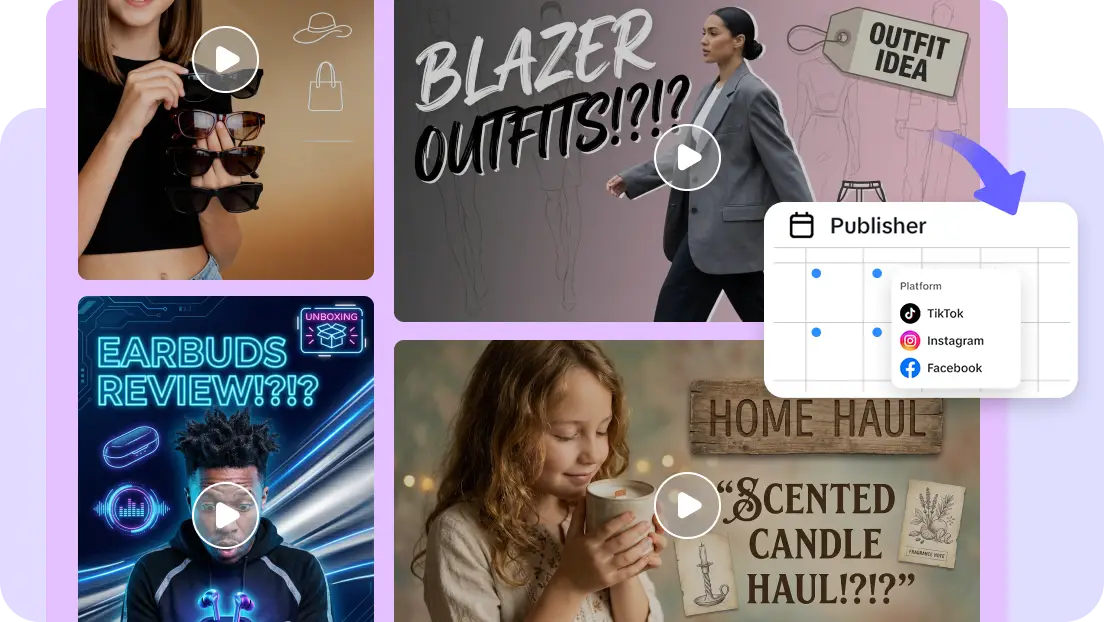

Scale Social Media Content

Generate compelling product explainers and social media ads in minutes. Simply input your text, and our AI avatars will create a polished video with studio-quality narration. This automated process allows you to produce content at scale, ensuring a consistent brand voice and high engagement.

Automate Corporate Announcements

Deliver internal updates and HR announcements with professional AI avatars. Convert your text-based memos into engaging videos instantly, ensuring your message is delivered clearly and consistently. Pippit's AI automates the entire process, saving resources while maintaining a high standard of quality.

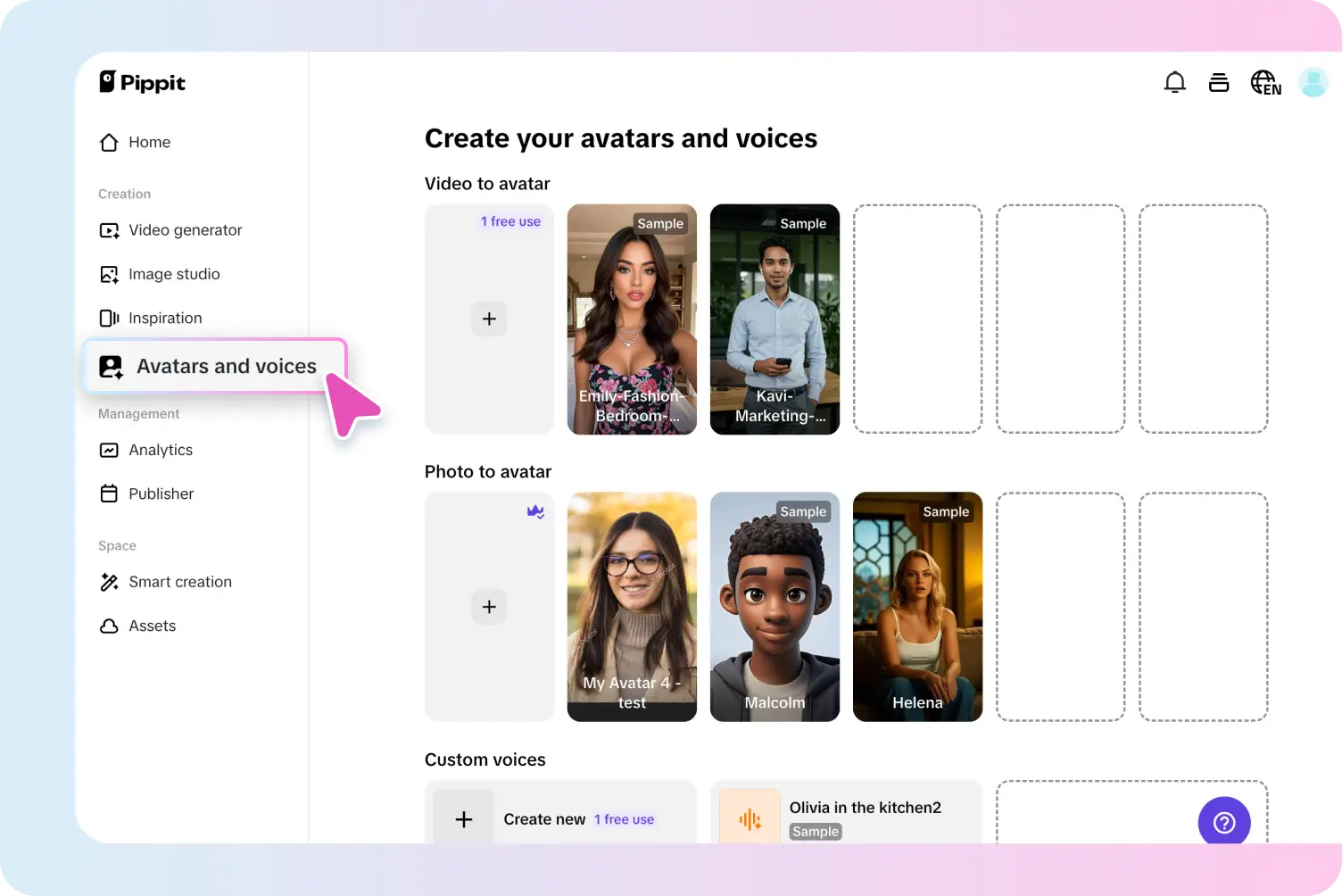

How to use Pippit's AI avatar tool

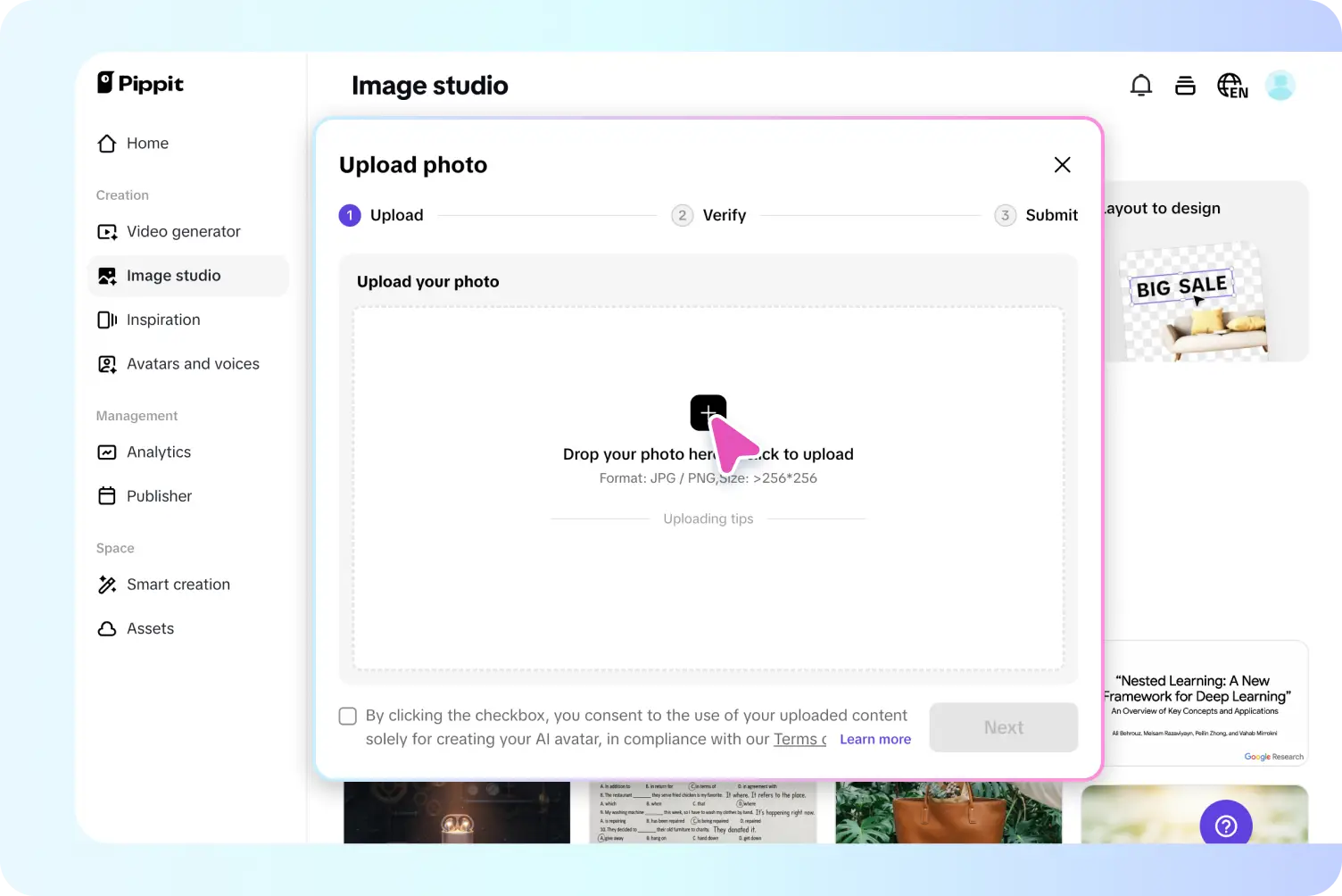

Step 1: Choose your AI avatar

Log in to Pippit and open the "Video generator" section from the left-hand menu. Click "Avatars" in the popular tools section to browse the AI avatars, then filter them by gender, age, scene, and more to find the right match. You can also create AI avatars from product links or uploaded media. Edit the avatar, voice, and script under "Settings" now, or leave those details to edit after the video is generated.

Step 2: Add narration

Once you have chosen your avatar, click "Edit script" to customize the script that the avatar will speak in sync. Use the "Language" and "Caption style" options below it to change the script language and the look of the captions. Click "Edit more" to open the "Audio" section in the right-hand menu, which offers a range of preset voices, and select one that matches the message and tone of your video. To adjust the avatar's appearance and framing, click "Avatars" in the "Settings" panel.

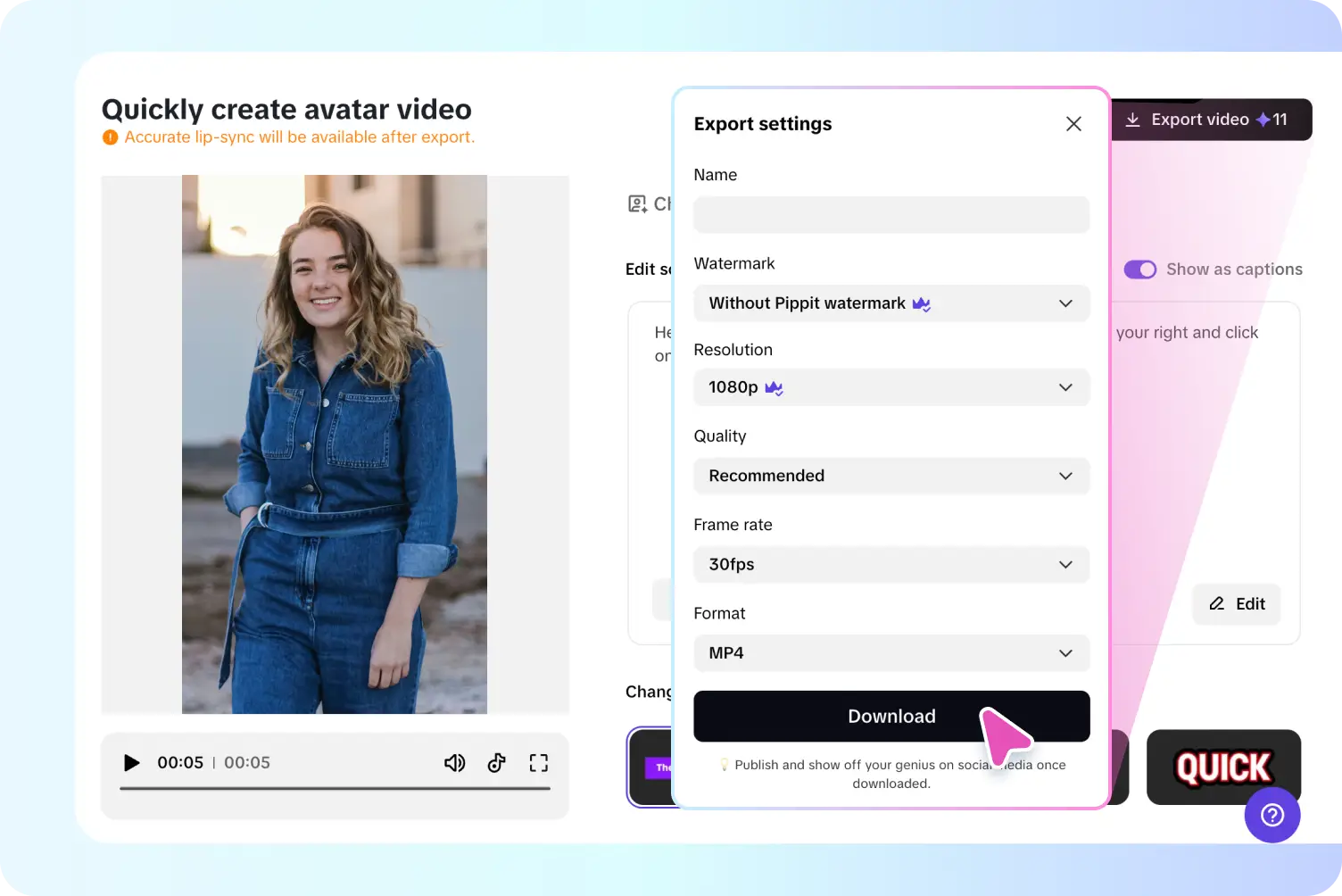

Step 3: Save and share

Once you are satisfied with the result, click the "Export" button in the top-right corner. Select your preferred video resolution (e.g., 1080p or 4K) and file format (MP4 or others). You can also adjust the aspect ratio to suit the platform you plan to share on (e.g., 9:16 for Instagram Reels or TikTok). Finally, download the video, share it directly to platforms like Instagram, TikTok, or YouTube, or use it on your website or in email campaigns to maximize reach and engagement.
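The resolution and aspect-ratio choices above combine into concrete frame sizes. The Python sketch below shows that arithmetic under two stated assumptions: a label like "1080p" or "4K" fixes the short side of the frame (1080 or 2160 pixels), and odd dimensions are rounded up to even numbers because 4:2:0 chroma subsampling, common in MP4/H.264 output, needs even width and height. The `SHORT_SIDE` table and `export_dimensions` helper are hypothetical illustrations, not Pippit's exporter, and the sizes Pippit actually produces may differ.

```python
from fractions import Fraction
from typing import Tuple

# Hypothetical mapping: resolution label -> pixel count of the frame's short side.
SHORT_SIDE = {"720p": 720, "1080p": 1080, "4K": 2160}


def export_dimensions(resolution: str, aspect: str) -> Tuple[int, int]:
    """Return (width, height) in pixels for a label like "1080p" and a ratio like "9:16"."""
    w_ratio, h_ratio = (int(part) for part in aspect.split(":"))
    short = SHORT_SIDE[resolution]
    if w_ratio >= h_ratio:
        # Landscape or square: the height is the short side.
        height = short
        width = round(Fraction(short * w_ratio, h_ratio))
    else:
        # Portrait (e.g. 9:16 for Reels or TikTok): the width is the short side.
        width = short
        height = round(Fraction(short * h_ratio, w_ratio))
    # Round any odd value up to the next even number for 4:2:0 chroma subsampling.
    width += width % 2
    height += height % 2
    return width, height


for res, ratio in [("1080p", "9:16"), ("1080p", "16:9"), ("4K", "16:9"), ("1080p", "4:5")]:
    print(res, ratio, export_dimensions(res, ratio))
```

Under these assumptions a 9:16 export at 1080p comes out at 1080 x 1920, the same pixel density as a landscape 1920 x 1080 export, just rotated to portrait.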

FAQs

What loudness target should I use for narration?

For most web videos, aim for about −16 LUFS integrated loudness; for streaming platforms, −14 LUFS is common. Keep true peaks below −1 dBTP and the loudness range (LRA) between 4 and 12 LU for consistent dialog clarity. To balance music against voice, see our audio ducking guide.
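A static gain change shifts integrated loudness and true peak by the same number of decibels and leaves the LRA essentially unchanged, so once a narration track has been measured (for example with an ITU-R BS.1770 / EBU R128 meter), checking it against these targets is simple arithmetic. The Python sketch below assumes the three measurements are already available; `LoudnessReport`, `plan_normalization`, and `db_to_linear` are hypothetical names, not part of Pippit, and the sketch only plans a gain rather than measuring loudness itself.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class LoudnessReport:
    integrated_lufs: float  # measured integrated loudness (LUFS)
    true_peak_dbtp: float   # measured true peak (dBTP)
    lra_lu: float           # measured loudness range (LU)


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in dB to a linear amplitude multiplier."""
    return 10 ** (gain_db / 20)


def plan_normalization(
    report: LoudnessReport,
    target_lufs: float = -16.0,  # web video target; pass -14.0 for streaming
    peak_ceiling_dbtp: float = -1.0,
    lra_bounds: Tuple[float, float] = (4.0, 12.0),
) -> Tuple[float, float, List[str]]:
    """Return (gain_db, predicted_true_peak_dbtp, warnings) for a static gain."""
    gain_db = target_lufs - report.integrated_lufs
    # A static gain moves the true peak by exactly the same number of dB.
    predicted_peak = report.true_peak_dbtp + gain_db
    warnings: List[str] = []
    if predicted_peak > peak_ceiling_dbtp:
        warnings.append(
            f"true peak would reach {predicted_peak:+.1f} dBTP; "
            "apply a true-peak limiter or accept a lower target"
        )
    low, high = lra_bounds
    # Gain does not change LRA, so an out-of-range LRA needs dynamics processing.
    if not low <= report.lra_lu <= high:
        warnings.append(
            f"LRA {report.lra_lu:.1f} LU is outside {low:g}-{high:g} LU; "
            "gain alone will not fix this"
        )
    return gain_db, predicted_peak, warnings


report = LoudnessReport(integrated_lufs=-23.0, true_peak_dbtp=-3.0, lra_lu=7.0)
gain, peak, notes = plan_normalization(report)
print(f"gain {gain:+.1f} dB (x{db_to_linear(gain):.2f}), peak {peak:+.1f} dBTP")
for note in notes:
    print("warning:", note)
```

For a track measured at −23 LUFS with a −3 dBTP peak, the sketch asks for +7 dB of gain, which would push the true peak to +4 dBTP, so it flags that a limiter is needed before the −16 LUFS target can be met cleanly.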

Can I see and edit the waveform of my voiceover?

Will loudness normalization squash dynamics or clip audio?

Can I import external voiceovers and background music?

How does narration stay in sync with captions and avatars?

What are the recommended export settings?

Start creating narration-perfect videos online!

Equip your team with text‑to‑video, waveform editing, and LUFS‑standard loudness in one workspace.